This blog post is part of a series based on the paper “Ten simple rules for large-scale data processing” by Arkarachai Fungtammasan et al. (PLOS Computational Biology, 2022). Each installment reviews one of the rules proposed by the authors and illustrates how it can be applied when running workflows in Terra. In this installment, we take a look at version control across a range of components including tools, dependencies, workflow scripts and data resources.

Version control is one of those technical concepts that’s obviously a good idea yet can be really tricky to do correctly. And as much as it has become an established practice for most computational scientists, many tend to underestimate the scope of what should be version-controlled.

(If you’ve never heard of version control or would like a refresher tailored for scientists, check out the Software Carpentry lesson on version control with Git.)

In this sixth rule, Arkarachai Fungtammasan and colleagues rightly emphasize that it’s not enough to control the version of the main software code and tools involved in analysis:

“Applying version control to all code is always recommended for reproducible research. In the context of a large-scale data analysis, we need to go beyond this initial step […].”

Indeed, the tools researchers use directly — those that feature most obviously in command lines and in scripts — typically rely on other, less visible components, or dependencies. Changes to those dependencies can affect analysis results, so it’s important to ensure specific versions are used rather than “the latest available”.

The authors also call out workflow scripts and data resources such as genome builds as components that should be carefully version-controlled.

Version-controlled workflows in Terra

Terra’s workflow execution system is designed specifically to enable version control at multiple levels, with minimal effort on the part of pipeline developers and end users.

Workflow scripts

“When multiple processing steps are combined into workflow (Rule 4), the workflow itself should be versioned.”

The WDL workflow scripts used in Terra are held in version controlled repositories — either the built-in Broad Methods Repository, which supports version control through the concept of “snapshots”, or the external Dockstore repository, which offers workflow-specific versioning features backed by the industry-standard code versioning of GitHub.

Tools, dependencies and computing environment

“To guard against interruptions, all dependencies should be pinned to a specific version (ideally through a version control hash or equivalent) that has been thoroughly tested (Rule 5). […] Utilizing container technology is highly encouraged, if allowed in the system, to guarantee the computing environment for processing.”

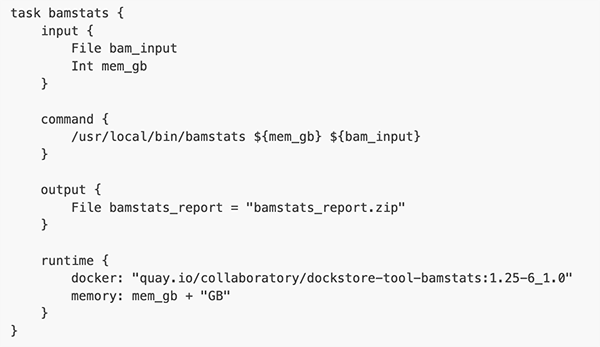

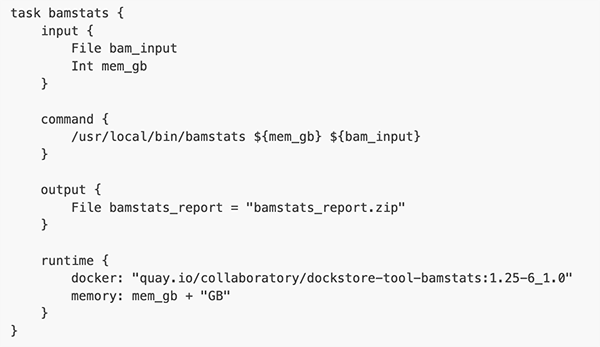

Within a WDL workflow, each individual analysis task specifies a Docker container that encapsulates all software tools and dependencies involved. Workflow developers and users can specify the exact version of the container using a unique identifier that ensures absolute reproducibility of the computing environment.

Data resources

“In biomedical data analysis, there are often components beyond the software and related dependencies that need to be included in the reproducibility plan. For instance, if the data processing relies on a genome build, using the most recent build and release in the pipeline will be insufficient. Instead, the processing needs to be tied to a specific build and release, much like the dependencies in the pipeline.”

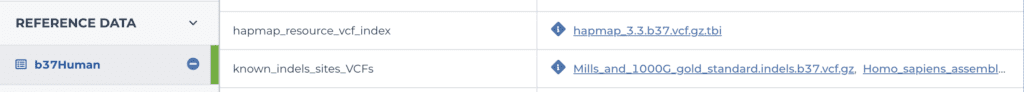

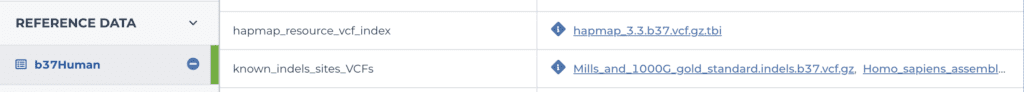

Terra workspaces provide data management features that include data manifests (see “data tables”), the ability to load versioned genome reference builds, and a system of key-value pairs that can be used to specify and label custom data resources for use in workflow configurations.

It’s worth noting that the workflow logging system also provides features that support version control by making it possible to go back and look at configuration details for past analyses, as we touched on in Rule 2 (Document Everything).

To learn more about making effective use of version control for large-scale data processing in Terra, please read “How does pipeline versioning work?” in the Terra knowledge base.